3D Cameras and Virtual Reality

Friday, May 6, 2016 at 11:33AM

This report is not a review of 3D cameras. It is rather an overview of the offerings in the 3D camera marketplace, as the backdrop for my own insights into this market. The devices shown are not meant to be comprehensive (impossible) but a breadth of examples to show the variety in the market.

3D content can be produced by a variety of methods. We will attempt to cover all the major bases here, including cylindrical 3D, spherical 3D, stereoscopic 3D, and their hybrids, stereo-cylindrical and stereo-spherical. Each of these may also have a variety of competing capture methods. Although scan-based 3D image capture is beyond the scope of this report, it will be touched upon briefly.

From this I will draw some conclusions about the market.

Over the last 18 months or so the market has been flooded with a whole variety of fist-sized 3D cameras, many of them ball-shaped. In the mass consumer market they are all going to fail.

When I see a new gadget and wish to gauge its potential success in the mass consumer market, I don’t just look at the need in the market, I ask myself, “How will a smartphone eat this?”

In the short run, new gadgets come along as standalone devices. They typically achieve mass market success if they are “pocketable,” and if so, they are only viable up until they are absorbed into the smartphone. Phone? Music Player? Digital Assistant/Day Planner? Camera? All now merely features or apps on our smartphones. Ball-shaped 3D cameras are neither pocketable nor a form factor easily absorbed into a smartphone. They therefore fail my mass consumer adoption litmus test. How well they do or do not perform at their task, technologically speaking, is irrelevant. Sure, a couple may find a niche user base, but as a mass market category, these are doomed.

The only winner I see in the personal 360º camera market is the Ricoh Theta. The Theta is a pocketable 3D camera that employs back-to-back fisheye lenses. It also benefits from being quite simple. I can see an easy path to absorption into the smartphone. I suspect we’ll see at least one handset come to market with this kind of configuration … but I do not think this will ultimately prove to be the lasting solution in the mass consumer 3D camera market, either.

Before I move on to a form factor that I do have confidence in, in the consumer market, I want to explore a few of the many other splendid variations in the 3D camera market.

The back-to-back fisheye lens solution of the Ricoh Theta is one we also see in the semi-pro and security camera markets. Fisheye lenses are popular in security / surveillance applications because their wide field of view can cover large areas with fewer cameras (hard to hide). Some can see up to 180º, so two placed back-to-back can see in a full circle. The Kodak PixPro SP360 is used in such security applications, but also has an available back-to-back tripod mount accessory that turns two of these professional grade cameras into a 360º system, with the assistance of stitching software. Nikon also makes a similar camera, the KeyMission 360, in one self-contained unit.

If you have existing hardware, such as two SLR camera bodies, you may consider other fisheye lenses that can be configured using cameras mounted in pairs, provided you have the software to assemble the final panorama. The professional and consumer fisheye lens markets go beyond the scope of this review, constituting a market of their own. Fisheye lenses of a variety of makes and models are available for most cameras, including ones for use with smartphones.

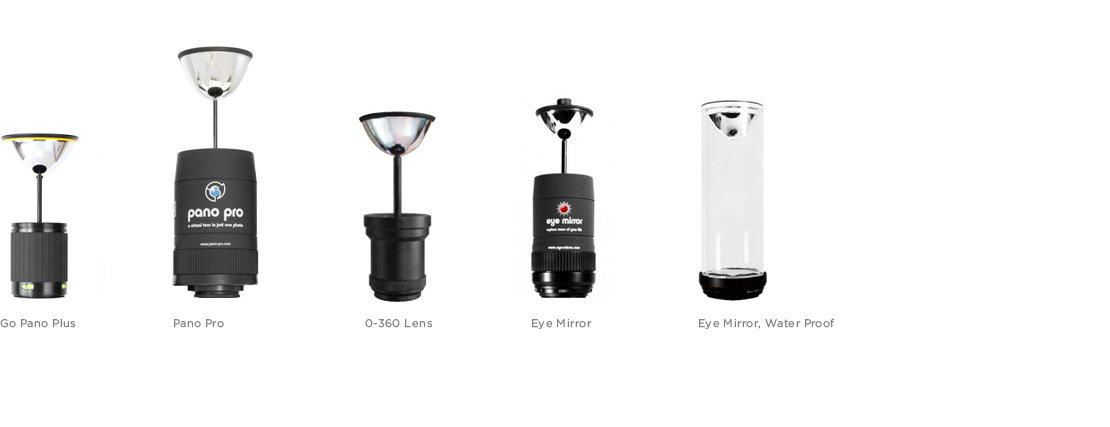

If you’re working with a single SLR camera body there is another solution available to you — convex mirror lens attachments that, with post processing, can produce 360º panoramas of acceptable quality that have the added benefit of no stitching required, eliminating the odds of a poor match between multiple lenses.

Mirror splitter lenses attach to an existing camera, as a modification to the optical path, creating stereoscopic output from a previously monoscopic, single-sensor camera. Poppy was a popular crowd-funded project that not only turned the user’s iPhone into a stereoscopic camera, but also doubled as a stereoscopic 3D viewer on its reverse side.

The GoPro HERO sports and hobby camera has an extensive ecosystem of accessories, many for 3D, as shown above. Though they’ve had their own stereoscopic rig available for years, they have mostly relied upon the third-party accessories market (and 3D printed accessories) to accommodate anything more complex. However, in recent weeks GoPro introduced the GoPro OMNI, a six camera rig strikingly similar to Freedom 360’s Explorer Plus.

In the pro-sumer and professional markets, there are many dedicated 3D camera products and configurations.

If the goal is not just 360º photography / videography, but something usable in Virtual Reality, you will need to understand a few terms like stereoscopy, parallax, and pupillary-distance. In our context, stereoscopy refers to imagery that has two camera views, one for each eye, mirroring human vision. Stereoscopic vision allows us to see the world from two slightly different perspectives. The difference between these perspectives is known as parallax, and it creates our perception of depth. If you place an object close to your face and open and close your eyes, one at a time, the object may seem to jiggle, as each eye views it from a different angle.
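To put rough numbers on parallax, here is a minimal sketch under a simplified geometric model: the eyes are treated as two points looking at an object centered straight ahead, and the parallax is the angle between the two lines of sight. The function name `parallax_angle_deg`, the 60 mm eye separation, and the sample distances are assumptions chosen for illustration, not measurements.

```python
import math

# Assumption: approximate adult pupillary distance, in millimetres.
ASSUMED_EYE_SEPARATION_MM = 60.0


def parallax_angle_deg(distance_mm: float,
                       eye_separation_mm: float = ASSUMED_EYE_SEPARATION_MM) -> float:
    """Angle, in degrees, between the two eyes' lines of sight to an object
    centered straight ahead at the given distance (simplified model)."""
    if distance_mm <= 0 or eye_separation_mm <= 0:
        raise ValueError("distances must be positive")
    return math.degrees(2 * math.atan(eye_separation_mm / (2 * distance_mm)))


# Hypothetical sample distances, from very near to far away.
for label, distance_mm in [("object near the face", 300.0),
                           ("object across the room", 5000.0),
                           ("object down the street", 50000.0)]:
    print(f"{label}: {parallax_angle_deg(distance_mm):.2f} degrees")
```

In this model the angle shrinks quickly with distance, which is consistent with nearby objects appearing to jump between eyes while distant ones barely move.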

The scale at which objects appear relative to the viewer is determined by pupillary-distance, or more crudely stated, the distance between one’s eyes. Average pupillary-distance for adult humans is about 60mm. Therefore a 3D cinematographer who wishes to place a viewer into a scene will have two camera lenses, placed ~60mm apart at center, and ~165cm above the floor-plane. On the other hand, if she wishes to give a “god’s eye view,” she may shoot stereoscopically with two cameras placed very far apart. Relative to the human pupillary-distance, this will make the objects appear small, or conversely, make the scene appear as if viewed through the eyes of a giant, an effect that might play very well, for instance, when experiencing a sporting event from above. At the opposite extreme, if stereoscopic pairs of cameras are placed very close together, objects in the scene will appear large, as if the viewer is very small, like a rodent’s eye view or an insect’s eye view.
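The relationship between camera spacing and perceived scale can be sketched with a common rule of thumb: the scene appears scaled by roughly the viewer's pupillary-distance divided by the distance between the cameras. This is an approximation, not an exact perceptual model, and the function name `apparent_scale` and the example camera spacings below are hypothetical.

```python
# The article's approximate adult pupillary-distance, in millimetres.
HUMAN_PUPILLARY_DISTANCE_MM = 60.0


def apparent_scale(camera_spacing_mm: float,
                   viewer_pd_mm: float = HUMAN_PUPILLARY_DISTANCE_MM) -> float:
    """Rule-of-thumb factor by which the scene appears scaled to the viewer.

    1.0 means life size, below 1.0 means the world looks miniature
    (giant's view), above 1.0 means the world looks enlarged (insect's view).
    """
    if camera_spacing_mm <= 0 or viewer_pd_mm <= 0:
        raise ValueError("distances must be positive")
    return viewer_pd_mm / camera_spacing_mm


# Hypothetical camera spacings for the three cases described in the text.
for label, spacing_mm in [("human scale", 60.0),
                          ("god's eye view", 6000.0),
                          ("insect's eye view", 6.0)]:
    scale = apparent_scale(spacing_mm)
    print(f"{label}: cameras {spacing_mm:g} mm apart, scene appears {scale:g}x life size")
```

Under this approximation, cameras six metres apart would make a stadium look like a tabletop model, while cameras six millimetres apart would make a tabletop look like a stadium.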

Stereoscopy can be achieved optically, or algorithmically.

For videography that is shot with a fisheye lens, a convex mirror, or a ball of lenses, and algorithmically stitched together into a cylinder or sphere, one can artificially generate two vantage points from the available image data and approximate stereoscopic vision at various pupillary-distances. With each post-production treatment, though, the image quality is going to experience some degree of distortion / degradation.

On the other hand, if the stereoscopic effect is generated from the original source, limited by the quality of the cameras used, the end result should be of higher quality.

From the collection above, the Vuze Camera, the Samsung Project Beyond camera, and others like them will see adoption for amateur sports and other events. The Nokia OZO and Jaunt ONE can be expected to compete at the higher end of the same space, for live-streamed VR sports and events.

These are also excellent devices for virtual tours including real estate, both commercial and residential; and virtual tourism, both marketing for a destination as well as virtual tourism as an end unto itself.

The use of 360° video in cinematic productions will not become a thing.

Stereoscopic? Yes. 360°? No.

I stand by this position for multiple reasons.

The director’s role is to “frame the narrative.” Directors wish to keep this control. Viewers want them to have it.

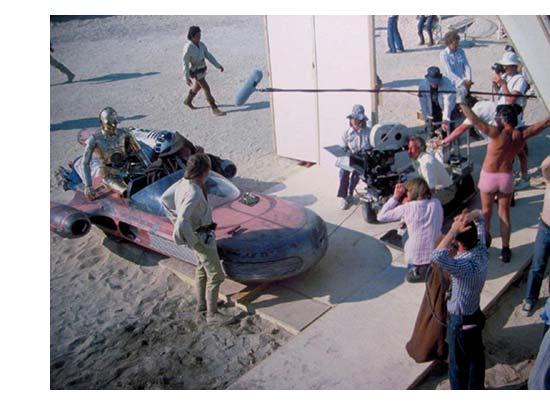

On set at a live shoot, “out of frame” is where infrastructure is hidden. Viewers willfully suspend disbelief, not mindful that just out of view stand lighting and mic-ing equipment, people holding reflector boards, a dolly moving the camera, many assistants and various specialists — all of this hidden from the camera. So in cinematic production, the director wants to frame a narrative, the viewer wants a reclining experience (don’t make me work), and when you factor in the production cost, the economics for 360° in cinema do not work. Stereoscopic on the other hand …

I predict that in cinematic VR production, a popular format will emerge: a narrow field of view, stereoscopic (20° wider than a given VR device’s FOV, perhaps). In this manner, the viewer experience will not be a completely locked vantage point. The production is then able to create the sensation of presence (things like the subtle perspective changes caused by one’s own breathing, for instance) while keeping a narrow crop, so the director is still in control of the narrative. The viewer will experience the illusion that they can look all about, but they are really just seeing a wide-angle stereoscopic view. This is my prediction.
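As a rough illustration of what “20° wider” buys the viewer, the sketch below computes the captured field of view and how far the head can turn to either side before the edge of the footage comes into view. It assumes the extra margin is split evenly left and right and considers horizontal rotation only. The 100° device FOV and the function names are hypothetical, not figures for any particular headset.

```python
def capture_fov_deg(device_fov_deg: float, margin_deg: float = 20.0) -> float:
    """Field of view to capture: the device FOV plus the extra margin."""
    if device_fov_deg <= 0 or margin_deg < 0:
        raise ValueError("device FOV must be positive and margin non-negative")
    return device_fov_deg + margin_deg


def max_head_turn_deg(device_fov_deg: float, captured_fov_deg: float) -> float:
    """Largest head rotation to either side before the footage edge is visible,
    assuming the margin is split evenly between left and right."""
    return max(0.0, (captured_fov_deg - device_fov_deg) / 2.0)


# Hypothetical headset with a 100 degree horizontal field of view.
device_fov = 100.0
captured = capture_fov_deg(device_fov)
print(f"capture {captured:g} degrees, head can turn "
      f"{max_head_turn_deg(device_fov, captured):g} degrees each way")
```

A margin this small covers small, natural head movements rather than deliberate looking around, which fits the idea of presence without handing over the framing.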

I would like to add, right now Virtual Reality is dominated by Silicon Valley, hence content creation is dominated by the console gaming industry. There is a desire to project game-dev thinking into virtual reality cinema. I do believe that VR movies are coming (and excellent VR gaming experiences will get here first), but I’m going on the record to say that the most widely adopted format for cinematic productions in VR will not ultimately be full 360° field of view.

When working in 3D, there are other capture tools besides cameras. Light field technology and laser-based 3D scanners offer other input methods, particularly at the higher end of the market. Heavy industry, land surveying, architecture / engineering, and the movie special effects industry are all already employing scanning technology. While this goes slightly beyond the scope of this overview, expect this field to become more crowded as well.

Forget 360° for a moment: stereoscopic is where the tech industry will soon refocus its energy.

For decades stereoscopic video has been used in robotics research where, like the eyes in our head, it is used for depth perception. As 3D vision and other depth sensors become mainstream technologies, these specialized companies will either focus on their other product lines, focus on vision software, or go out of business (as Videre already has).

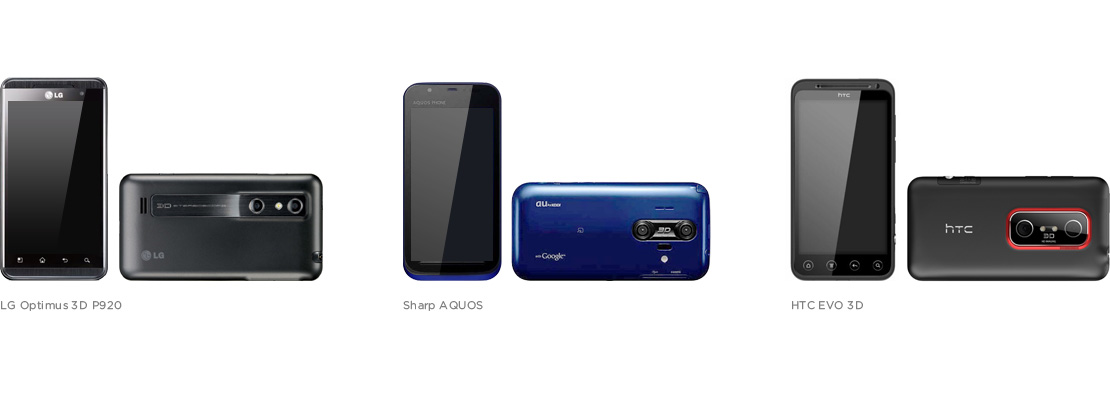

Five years ago, for CES 2011, stereoscopic cameras were a thing. Many were introduced. Most are no longer available. The trend peaked prematurely. The problem in 2011 was that there were no good 3D content consumption devices. You could take stereoscopic 3D images and video, but there were very few ways to view them. In 2011 there were military-grade VR headsets, and personal viewers that were little more than glorified Victorian-era stereoscopes.

What a difference five years makes (in tech).

This time will be different. Today, with many low-cost HUDs on the market, adding stereoscopic photography to smartphones will complete the virtuous loop of content creation and content consumption. In the user-generated-content space, I expect to see short clips, Vine-like VR moments, perfect for handheld viewers like Google Cardboard, Viewmaster VR, and Goggle-Tech.

Bringing this review full circle, I am projecting that the stereoscopic camera smartphone will make a comeback … updated. Firstly, the lenses need to be placed at the proper pupillary-distance of approx. 60mm, to match human scale. Additional depth perception sensors will also be added.

I believe quite strongly that this is the form factor that Apple will be using in near future iPhone updates, if not 2016, then likely by 2017. There are many reasons to believe this will be the case. Two years ago Apple purchased the 3D sensor firm PrimeSense, the company whose depth sensor technology was licensed by Microsoft for use in the Kinect. Apple has thus far stayed mum, and PrimeSense technology has yet to appear in an Apple product. Last year Apple acquired LinX, holder of IP for two-lens camera systems. No product has yet come to market using LinX two-lens technology. Then early this year, Apple acquired FlyBy Media, responsible for the software licensed by Google for their multi-camera and depth sensor based 3D mapping (SLAM) project known as Tango. Apple now owns all the technology, both hardware and software, to build just such a device. Add to this my first requirement for consumer market success: stereoscopic 3D is an easily pocketable form factor with a clear path to being eaten by a smartphone.

I also believe that an Android handset, in a limited production run, may come to market first. Sony’s CTO doesn’t believe Apple can implement LinX technology in a 2016 iPhone due to a supply chain bottleneck, but an Android manufacturer producing a limited edition model could move faster.

This article can be downloaded as a PDF deck.